It’s A Device, Not A Magic Black Mirror: Demystifying Transcoding

Read part 1 and part 2.

I’m writing this on a Mac Powerbook. To my left is my iPhone 7. My wife, who has an iPhone 8, is texting me about a Dodgers shirt she wants to buy for our daughter. (The answer is yes, of course.) In front of me is my editing machine, a late 2009 iMac, on which I just edited my feature film. Also on my desk is a small, old 1080 television with a Roku. Next to the coffee maker are two old iPhone 5’s my daughter thinks are now hers. Next to them, a pair of Amazon Kindles. Just on the other side of the wall is our new 4K UHD 55-inch Roku television, where I watched the World Series and wept as my Dodgers lost in crisp, beautiful 4K. And in the corner is our old Samsung Smart TV that we’ll be moving into another room later this week. In our bedroom, we have an ancient 1080 television with a Roku 2 connected to it, as well as my wife’s PC laptop. We also have an Xbox One that we used until this week for streaming video via Hulu, Amazon, and Netflix.

And none of them have identical streaming requirements.

So how do you plan for all of those devices? The answer…carefully.

An Example: Xbox One Streaming Requirements

At first glance, the requirements for Xbox One or a Samsung Smart TV might appear similar. But dig into the required settings and you’ll see they each call for different profile levels, distinct frame sizes, and other subtle differences. I can’t reveal all the details for each device, unfortunately. They’re covered under NDAs. But we can discuss a few settings without too much trouble. If I’m clever, we can dive into a few without being specific to the device. But first let’s talk about a non-secret setting for the Xbox One which might cause a customer to need additional variants/flavors – and that is frame size.

Xbox One has two modes for video playback. One is full screen, taking up the entirety of your television. The other is “inset,” meaning the video frame sits inside the Xbox One menu but is still expected to play content. A normal streaming player takes a 1080 variant/flavor and simply scales it down to the size of the smaller video player frame when not in full screen. But Xbox One has specific requirements for inset playback: the variant must not exceed a particular bitrate or a standard definition frame size.

Profiles and Audio Settings

Another group of settings that tend to differ among devices are profile levels. As discussed in the Best Practices, one feature of video encoding is the ability to set certain profiles and levels: Baseline, Main and High. Some devices want to use only Baseline, others insist on all their variants using High Profile. This makes creating one simple set of variants/flavors challenging.

An area we don’t get into very often, but that has quickly become important, is audio. Normal streaming audio settings are very straightforward. But an area that concerns many of the customers I’ve worked with recently is the use of multi-audio sources and multi-audio streaming. When a video is served up to a player, the video and audio are essentially stripped from the container and decoded separately. (This is also why, when a streaming player is struggling to meet the needs of limited bandwidth, you might see your Netflix or Hulu show go out of sync.) This separation is what allows multiple audio tracks to be made available to the user and switched on the fly.

But this also brings up the question of how those audio streams are getting ingested into the CMS/KMC. The flavor setup and process may be different if they are coming from individual audio-only sources or if one source contains all the needed languages. In either case, the formatting of those source files is critical and often requires custom variants/flavors to be generated.

In the following excerpt from The Best Practices of Multi-Device Transcoding (2018 Edition) additional settings for devices are discussed, audio is highlighted and the variant/flavor general settings are detailed:

AUDIO

Much of streaming video got its start in the need to digitize audio for CD usage in the 1980s. In fact, the initial bitrate limitations for video had everything to do with the 1.5 Mbps data rate of CDs and CD-Rs.

Just like video, audio requires a codec and has specifications around bitrate and sample rate as well as track layout. And not all codecs are compatible with streaming, since the player must be able to decode them. Uncompressed audio is not used for streaming video, therefore some compression must be applied.

STREAMING AUDIO CODECS

AAC, or Advanced Audio Coding, is the most common audio codec in use as of 2018. Its compression does a good job of preserving the source file’s signal-to-noise ratio and overall fidelity. (Google’s WebM format uses a similar codec called Vorbis audio.)

HE-AAC (High-Efficiency AAC) is an extension of AAC that compresses more efficiently, which makes it best suited to low bitrates.

AUDIO – SAMPLE RATE

A sample rate is similar to a video resolution in that it governs how closely the encoded audio matches the source audio. Think of each audio sample as a line of pixels of video. The more lines of resolution, the sharper and clearer the image. Similarly, the more samples per second, the better the fidelity of the audio. For streaming video applications, the sample rate is measured in kHz. The standard audio sample rate for streaming video is 44.1 kHz, or 44,100 samples per second. 44.1 kHz is the sample rate of CD audio; DVD and Blu-ray audio typically use 48 kHz.

A source file may use the higher quality 48 kHz.

Regardless of the codec, the bitrate and sample rates are common among the formats. So audio using AAC, HE-AAC, and Vorbis Audio will all use 44.1 kHz for streaming audio.
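To put numbers on why uncompressed audio isn’t streamed, here is a minimal sketch of the raw PCM bitrate arithmetic. The 16-bit depth is the CD audio standard, and the 128 kbps AAC target is purely an illustrative assumption of mine, not a value from the Best Practices.

```python
def pcm_bitrate_bps(sample_rate_hz: int, bit_depth: int, channels: int) -> int:
    """Raw (uncompressed) PCM bitrate in bits per second."""
    return sample_rate_hz * bit_depth * channels


# 16-bit stereo at the two sample rates discussed above.
cd_stereo = pcm_bitrate_bps(44_100, 16, 2)    # 1,411,200 bits per second
source_48k = pcm_bitrate_bps(48_000, 16, 2)   # 1,536,000 bits per second

# Hypothetical streaming target, chosen only for illustration.
AAC_TARGET_BPS = 128_000

print(f"44.1 kHz stereo PCM: {cd_stereo / 1000:.1f} kbps")
print(f"48 kHz stereo PCM:   {source_48k / 1000:.1f} kbps")
print(f"Compression needed for the assumed target: {cd_stereo / AAC_TARGET_BPS:.1f}x")
```

Notice that stereo audio alone, left uncompressed, would eat up nearly the entire 1.5 Mbps figure mentioned earlier.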

AUDIO CHANNELS

Each stream of audio can contain multiple channels. Each channel may contain different audio from each other depending on the use case and formatting.

STEREO: Normally we use 2-Channel Stereo audio. This means there is a left channel, meant for a left speaker, and a right channel, meant for a right speaker. Each of these channels typically contains largely the same information and is meant to create a balanced listening experience. These two channels will be embedded into 1 single audio stream and matrixed together. The player and playback device will then know to send one channel in the stream to the Left Speaker and the other channel to the Right Speaker. Some sound engineers will take advantage of stereo’s 2 channels by “panning” sound FX or music from one channel to the other – making it seem as if the audio is moving along with the picture. (For example, panning the sound of a car passing through the frame left to right to match the left-to-right movement of the on-screen image.)

MONO: Older content and some UGC (user generated content) shot on SD video devices like older smart phones might use Mono, or single channel, audio. In the beginning of film sound, only one speaker was used to present the sound track for a movie. This meant that all the audio would only be heard from the center of the screen – no fancy surround or stereo panning.

5.1 AUDIO: Theatrical audio uses surround sound to immerse a user into a movie. It puts some sound effects in rear speakers to make sounds move from the back of the audience to the front, and vice versa for a desired effect. (For example, a plane flying from behind the audience towards the front of the audience as the plane appears on screen doing the same.) There are many surround formats from 5.1 to 6.1 to 8.1. For streaming video and home theatre usage, we typically only deal with 5.1 audio. Each channel in a multi-channel audio mix represents a speaker in the listening environment of the theatre or home entertainment system.

The 5 in 5.1 stands for the following 5 discrete channels of audio:

Channel 1: Front Left

Channel 2: Front Right

Channel 3: Center

Channel 4: Rear Left Surround

Channel 5: Rear Right Surround

And the .1 stands for the LFE, or Low-Frequency Effects, channel. The speaker for this channel is designed to carry only low frequencies, below 120 Hz.

AUDIO – SOURCE FILES

Source file audio can be very different than the resulting streaming variant audio. A source file may have multiple audio streams with multiple channels of audio, whereas a streaming variant/flavor will typically only have one 2-Channel Stereo stream. When multi-channel audio sources are used, it may be necessary to downmix or otherwise map the audio so that the resulting transcodes are true 2-Channel Stereo. By default, a transcoder typically takes the first audio stream and the first 2 channels of that stream and outputs a single 2-Channel Stereo stream.

It can be easy to make a mistake and grab only the Front Left and Front Right channels from a 5.1 audio source to create a 2-Channel output. The problem with this scenario is that the Front Left and Front Right channels typically do not contain dialog in a true 5.1 mix; dialog usually lives in the Center channel. So if you do use only the Front Left and Front Right to create a stereo output, it might contain little to no actual dialog – which is, of course, not a good thing.
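Here is a minimal sketch of the difference between grabbing the front pair and doing a proper downmix, working on a single frame of samples. The gains of roughly -3 dB (about 0.707) for the center and surround channels are a commonly used convention that I’m assuming here, not values from the Best Practices, and the LFE channel is simply left out.

```python
import math

# Assumed mix gains (about -3 dB). A common convention, not a value from
# this article; your transcoder or mix engineer may use different ones.
CENTER_GAIN = 1 / math.sqrt(2)
SURROUND_GAIN = 1 / math.sqrt(2)


def naive_front_pair(fl, fr, c, ls, rs):
    """The mistake: keep only Front Left and Front Right."""
    return fl, fr


def downmix_51_to_stereo(fl, fr, c, ls, rs):
    """Fold one frame of 5.1 samples (LFE omitted) into a stereo pair."""
    left = fl + CENTER_GAIN * c + SURROUND_GAIN * ls
    right = fr + CENTER_GAIN * c + SURROUND_GAIN * rs
    return left, right


# A frame where only the dialog (Center) channel carries signal.
frame = dict(fl=0.0, fr=0.0, c=0.5, ls=0.0, rs=0.0)

print("front pair only:", naive_front_pair(**frame))
print("downmix:        ", downmix_51_to_stereo(**frame))
```

With the front pair alone the dialog disappears entirely, while the downmix carries it equally in both channels. A real downmix would also need to guard against clipping when several channels are loud at once, which this sketch ignores.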

It is vital to look at your source formatting when creating a transcoding strategy. There is not much consistency in the industry for source audio formatting. The layout of a source file may vary greatly from vendor to vendor or even from department to department within a single organization.

And 5.1 audio is not the only usage of multi-channel sources. A studio might create a video master that also contains a number of different language dubs that the film/content is meant to be heard in.

So a single source file meant for North America might be formatted like this:

Stream #1: Video

Stream #2: English 2-Channel Stereo

Stream #3: Spanish Mono

Stream #4: French 2-Channel Stereo

However, another source file might have this layout instead:

Stream #1: Video

Stream #2: Front Left

Stream #3: Front Right

Stream #4: Center

Stream #5: Rear Left Surround

Stream #6: Rear Right Surround

Stream #7: Stereo Downmix

Stream #8: Commentary

A Transcoding engine will need to be told how to map these different audio source types correctly to output a file that can be streamed.
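As a rough sketch of what “telling the engine” might look like, the two layouts above can be written down as data and run through a small planning function. Everything here is hypothetical: the `Stream` type, the action names, and the channel counts I’ve assigned to the second layout (the listing above doesn’t state them) are assumptions for illustration, not any real transcoder’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stream:
    index: int      # stream number as listed in the source layout
    label: str      # e.g. "English", "Center", "Stereo Downmix"
    channels: int   # 1 = mono, 2 = stereo (assumed where not stated)


MULTI_LANGUAGE = [
    Stream(2, "English", 2),
    Stream(3, "Spanish", 1),
    Stream(4, "French", 2),
]

DISCRETE_SURROUND = [
    Stream(2, "Front Left", 1),
    Stream(3, "Front Right", 1),
    Stream(4, "Center", 1),
    Stream(5, "Rear Left Surround", 1),
    Stream(6, "Rear Right Surround", 1),
    Stream(7, "Stereo Downmix", 2),
    Stream(8, "Commentary", 2),
]

SURROUND_LABELS = {
    "Front Left", "Front Right", "Center",
    "Rear Left Surround", "Rear Right Surround",
}


def plan_main_audio(streams):
    """Return (action, source stream indexes) for the main stereo output."""
    by_label = {s.label: s for s in streams}
    # Prefer a ready-made stereo downmix when the source provides one.
    if "Stereo Downmix" in by_label:
        return "copy_stereo", [by_label["Stereo Downmix"].index]
    # Otherwise a full set of discrete surround streams must be downmixed.
    surround = [s.index for s in streams if s.label in SURROUND_LABELS]
    if len(surround) == len(SURROUND_LABELS):
        return "downmix_surround", surround
    # Fall back to the first audio stream, upmixing mono if needed.
    first = streams[0]
    action = "copy_stereo" if first.channels == 2 else "mono_to_stereo"
    return action, [first.index]


print(plan_main_audio(MULTI_LANGUAGE))
print(plan_main_audio(DISCRETE_SURROUND))
```

The point of the sketch is that the same rule (“give me a stereo track”) resolves to different source streams depending on the layout, which is why the mapping has to be spelled out per source type.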

MULTI-AUDIO PLAYBACK

Modern streaming players can handle multi-audio switching on the fly, so it is now common to find multi-language audio sources where each stream needs to be pulled and transcoded into a standalone, audio-only variant/flavor. The video and audio are then re-synced in the player. This means that an adaptive set for multi-audio content might include 6-8 video variants/flavors and an additional 2-8 audio-only variants representing the different languages or audio types (commentary, audio for the visually impaired).
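To make the shape of such an adaptive set concrete, here is a minimal sketch that writes an HLS master playlist with separate audio-only renditions. It assumes HLS delivery, and the file names, bitrates, and resolutions are placeholders of my own, not recommended settings.

```python
def master_playlist(video_variants, audio_tracks, group_id="audio"):
    """Build an HLS master playlist with one shared audio rendition group.

    video_variants: list of (bandwidth_bps, width, height, uri)
    audio_tracks:   list of (name, language_code, uri); first is the default
    """
    lines = ["#EXTM3U"]
    for i, (name, lang, uri) in enumerate(audio_tracks):
        default = "YES" if i == 0 else "NO"
        lines.append(
            f'#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="{group_id}",NAME="{name}",'
            f'LANGUAGE="{lang}",DEFAULT={default},AUTOSELECT=YES,URI="{uri}"'
        )
    for bandwidth, width, height, uri in video_variants:
        # Each video variant points at the same audio group, so the player
        # can switch language without switching video.
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},"
            f'RESOLUTION={width}x{height},AUDIO="{group_id}"'
        )
        lines.append(uri)
    return "\n".join(lines) + "\n"


# Placeholder renditions, for illustration only.
audio = [
    ("English", "en", "audio_en.m3u8"),
    ("Spanish", "es", "audio_es.m3u8"),
]
video = [
    (800_000, 640, 360, "video_360.m3u8"),
    (5_000_000, 1920, 1080, "video_1080.m3u8"),
]

playlist = master_playlist(video, audio)
print(playlist)
```

Adding a language then means adding one audio-only variant and one line to the playlist, rather than re-encoding every video variant.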

DEVICES

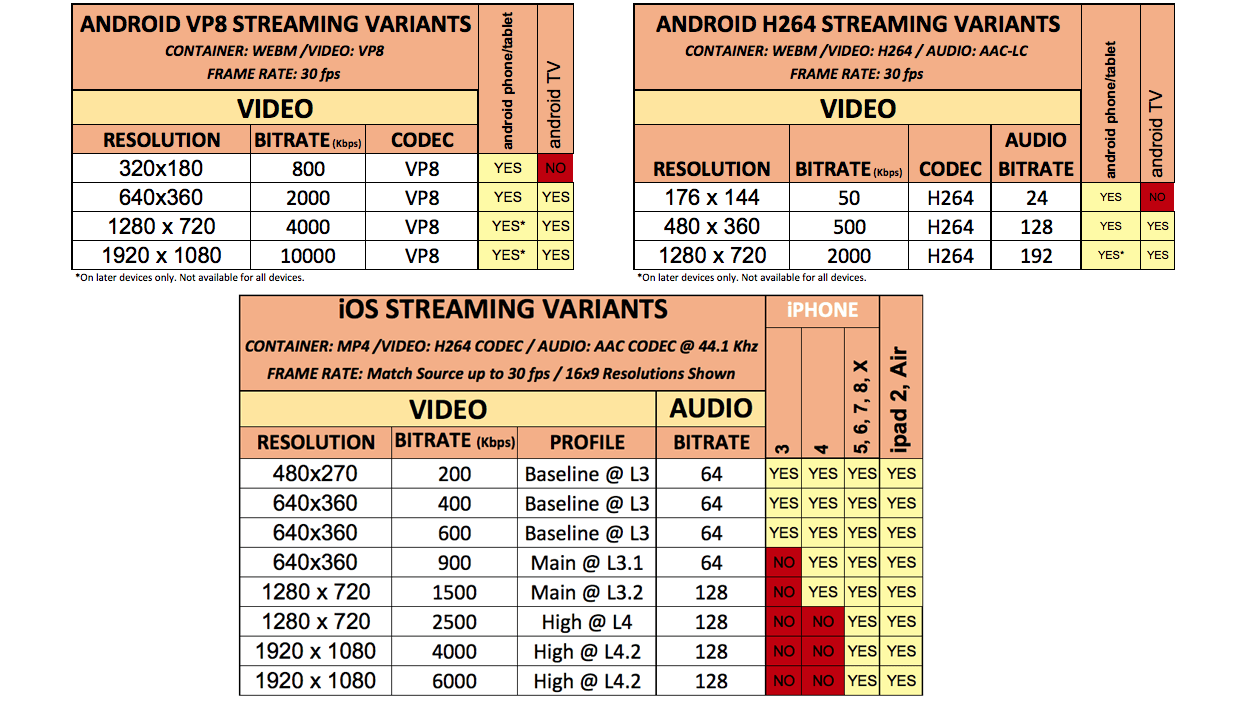

As mentioned in a previous section, not all settings apply to all devices. In 2018, there are a number of different devices and means by which video might be streamed. These range from the traditional laptop or desktop computer to smart phones and even your home Smart TV. Video game systems also stream video today, as do Blu-Ray players and other connected devices. Bitrates, profile levels, and resolution spreads all differ on these devices, with some crossover. In order to reach all devices, custom variants will need to be created with specific device tags.

Devices fall into the following 3 primary categories:

- Desktop

- Mobile

- Connected Device

What is great about 2018 Streaming Video and HTML 5 is that the same variants, if you are thoughtful about how you format them, can be served up to multiple devices. In the early days of streaming, a desktop might be pulling VP6 (an antiquated streaming format) but an early smart phone might be streaming an MP4 file. Now both use cases can access the same MP4.

DESKTOP

Desktop refers to either a home tower-type computer or a laptop that utilizes a browser to access the internet over either a wired Broadband connection (Ethernet) or a WiFi network. The limitations of a desktop environment extend to the computing power of the individual device given its age and operating software. Older desktops and laptops will have slower speeds and capability compared to a newer system.

Video transcoding settings, however, are fairly flexible, with desktops and laptops being able to handle all profile levels and resolutions up to 1080p. (4K is still limited in its streaming usage as of this writing, and most computers still struggle to stream full 4K.)

MOBILE

A smart phone or tablet in 2018 can stream 1080 content easily and even can record in 4K at 50 Mbps. However, there are several limitations and recommendations from device manufacturers in order to best use their devices.

GOP Size is recommended to be around 2-3 seconds, meaning a video at 30 fps would have a GOP size of 60 frames to 90 frames. As mentioned, key-frames, when playing back, are considered IDR frames (Instantaneous Decoder Refresh) and are used as adaptive switch points and seek points for jumping around the video’s timeline (skipping forward or back, etc.).
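The GOP arithmetic is simple enough to sketch. The 2-3 second window comes from the recommendation above; the frame rates other than 30 fps are just examples I’ve added.

```python
def gop_frames(fps: float, seconds: float) -> int:
    """GOP size in frames for a given frame rate and keyframe interval."""
    return round(fps * seconds)


for fps in (24, 30, 60):
    low, high = gop_frames(fps, 2), gop_frames(fps, 3)
    print(f"{fps} fps: GOP of {low} to {high} frames")
```

Because keyframes double as the adaptive switch points, it helps if every variant in a set uses the same interval in seconds, so the switch points line up across variants.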

Resolutions and profiles/levels also must be carefully considered. The age of the device might mean it can only support SD variants or even only outdated formats like 3GP (used in early mobile phones with video).

Apple’s HLS spec also calls for the use of an audio-only flavor in case the user’s signal drops so low that streaming video is no longer possible – this ensures the player keeps playing and can then adapt back up to a higher-bitrate variant that includes video.

CONNECTED DEVICES

Connected devices are those that plug into your home television. This category also covers Smart TVs. It is a growing category of device that has, unfortunately, a wide variety of variables that differ from use case to use case. Not all devices are the same. The specs for an Apple TV are different than those for a Roku. When newer models come out, the specs for each change. That means you might need to account for both older and newer models.

Other connected devices include Amazon Firestick, Chromecast, and Gaming Consoles.

GAMING SYSTEMS

Xbox One, Xbox 360 and PS4 all can stream video. In fact, the streaming on these devices can be superior to that of a desktop since each device is a dedicated video decoder and system meant primarily for video and audio playback.

However, each device has different settings that have to be closely aligned – especially when it comes to profiles and levels as well as frame rates. Some gaming consoles might require only baseline profile whereas others might require all variants use high profile. Frame sizes may also differ. For example, Xbox requires both full screen sizes and a smaller frame for when video is inset into its menus. Xbox also requires only high profile whereas other systems require main or baseline only. Streaming to gaming consoles requires customization and finesse.
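Since the real specs are under NDA, here is a sketch with made-up devices showing why one ladder rarely fits every console. The device names, rules, and variant values are all placeholders of mine, not actual requirements for any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    profile: str   # "baseline", "main", or "high"
    height: int    # frame height in pixels
    kbps: int      # video bitrate


@dataclass(frozen=True)
class DeviceRule:
    allowed_profiles: frozenset
    max_height: int


# Placeholder ladder and rules, invented for illustration only.
LADDER = [
    Variant("sd_baseline", "baseline", 360, 600),
    Variant("sd_high", "high", 480, 1200),
    Variant("hd_main", "main", 720, 2500),
    Variant("hd_high", "high", 1080, 5000),
]

RULES = {
    "console_a": DeviceRule(frozenset({"high"}), 1080),
    "console_b": DeviceRule(frozenset({"baseline"}), 720),
}


def variants_for(device, ladder=LADDER, rules=RULES):
    """Return the variants from the ladder that a device's rule allows."""
    rule = rules[device]
    return [
        v for v in ladder
        if v.profile in rule.allowed_profiles and v.height <= rule.max_height
    ]


for device in RULES:
    print(device, [v.name for v in variants_for(device)])
```

In this made-up case the two consoles share no variants at all, which is exactly the situation that forces you to generate custom flavors and tag them per device.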

SMART TVs

Smart TVs are not as smart as other devices just yet. They are getting better and better, but the built-in memory of each television is still limited, which can cause apps to load slowly and lead to other playback issues. Two primary manufacturers dominate this market right now. Sony and Samsung Smart TVs both use similar settings. Typically, simpler profile levels are required.

I hope you’ve enjoyed this series of blogs detailing some of the transcoding settings and concepts that guide our decisions on how flavors are created. Read the full Best Practices of Multi Device Transcoding (2018 Edition) for even more detail including subtitles, video artifacts, and other variant formatting settings. Now let’s hope the Dodgers can win next year. (Why put Madson in? Why?)
